Alpha Divergence

- Rana Basheer

- Apr 21, 2011


Updated: Apr 2, 2021

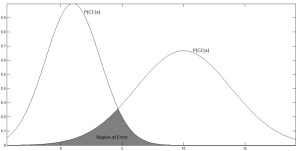

A common task is to classify an observation x into one of two categories.

We assign x to whichever category has the higher probability.

However, there is a non-zero probability that x actually belongs to the other category.

Bayes Error

The larger the area of this overlap region, the greater the chance of misclassification. The area of this error region is given by
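In commonly used notation (assumed here, since the symbols are not given in the text), with class-conditional densities $p(x \mid C_1)$, $p(x \mid C_2)$ and priors $P(C_1)$, $P(C_2)$, the area of the error region is usually written as:

$$
P_{\text{error}} = \int \min\big(\, p(x \mid C_1)\,P(C_1),\; p(x \mid C_2)\,P(C_2) \,\big)\, dx
$$

At each x the integrand picks the smaller of the two weighted densities, which is the probability mass lost when x is assigned to the more probable category.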

Since the minimum function is difficult to handle analytically, it is replaced by a smooth power function as
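A standard inequality of this kind, given here as a sketch in assumed notation, holds for any $a, b \ge 0$ and $0 \le \alpha \le 1$:

$$
\min(a, b) \le a^{\alpha}\, b^{1-\alpha}
$$

To see why, suppose $a \le b$. Then $a^{\alpha} b^{1-\alpha} \ge a^{\alpha} a^{1-\alpha} = a = \min(a, b)$, and the case $b \le a$ is symmetric.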

Hence the upper bound for the area is given by
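With class-conditional densities $p(x \mid C_i)$ and priors $P(C_i)$ (notation assumed here, not taken from the text), a bound of this type is commonly written as $\int \big(p(x \mid C_1)P(C_1)\big)^{\alpha} \big(p(x \mid C_2)P(C_2)\big)^{1-\alpha}\, dx$ for $0 \le \alpha \le 1$. The following is a minimal numerical sketch, not a definitive implementation: it assumes two hypothetical unit-variance Gaussian classes with equal priors and compares the error area with the power-function bound for a few values of $\alpha$. All names and parameter values are illustrative.

```python
import numpy as np

def gaussian_pdf(x, mean, std):
    """Density of a 1-D normal distribution evaluated at x."""
    z = (x - mean) / std
    return np.exp(-0.5 * z**2) / (std * np.sqrt(2.0 * np.pi))

# Hypothetical setup: equal priors, unit-variance Gaussians centred at 0 and 2.
x = np.linspace(-10.0, 12.0, 200_001)
dx = x[1] - x[0]
joint1 = 0.5 * gaussian_pdf(x, 0.0, 1.0)  # p(x | C1) * P(C1)
joint2 = 0.5 * gaussian_pdf(x, 2.0, 1.0)  # p(x | C2) * P(C2)

def integrate(values):
    """Riemann-sum approximation of the integral on the uniform grid."""
    return float(np.sum(values) * dx)

def bayes_error():
    """Area under the pointwise minimum of the two weighted densities."""
    return integrate(np.minimum(joint1, joint2))

def power_bound(alpha):
    """Upper bound obtained by replacing min(a, b) with a**alpha * b**(1 - alpha)."""
    return integrate(joint1**alpha * joint2**(1.0 - alpha))

error = bayes_error()
bounds = {alpha: power_bound(alpha) for alpha in (0.1, 0.3, 0.5, 0.7, 0.9)}

print(f"error area: {error:.4f}")
for alpha, bound in bounds.items():
    print(f"alpha = {alpha:.1f}: bound = {bound:.4f}")
```

For this symmetric setup every bound is at least as large as the error area, and the tightest bound among the sampled values occurs at $\alpha = 0.5$. In general the best $\alpha$ depends on the two distributions.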

The
